Tech Update: AI Generators (Feb 2023)

Now the AI genie is in full flight, we’ve been anticipating the exponential growth in interest in creative applications – and also in the ethical and moral questions being asked. This month, we have not been disappointed! We start our review with some of the tools we’ve seen emerge and finish with a review of the legal situation that’s been taking shape over the last month, since our January update on the topic.

It takes Text-to-???

It seems most of the online world has bought into the hype around ChatGPT, and who can blame folks for wanting in on the action – it reached a million users faster than any platform has previously in the history of the internet ie., 5 days. Whilst it appears that Google and others have for once been caught sleeping on the job, Microsoft has stolen a march and helped OpenAI monetize its premium chat service for a mere $20/month (just a week after the extended partnership was announced and only if you are US-based) from which each partner will no doubt benefit massively. In the meantime, there has been a huge number of Chrome browser extensions launched based on ChatGPT for everything from search using voice commands, article summaries, writing Excel formulae, email assistance, LinkedIn comments management, to SEO optimization and a heap of other useful-ish applications. Go to the Chrome web store and search for the ones that will help with your creative pipeline – I’m sure someone somewhere will have thought of it before you.

I found a few uses for the YouTube summary assistants of which there are a couple of options, this being one (by Glasp) –

After adding the extension, it took a couple of seconds in total to transcribe the video, copy the text into my ChatGPT account and summarize an hour long interview I did with John Gaeta last year. This is the summary of that interview, which is a pretty good overview of what was discussed albeit the first part is almost verbatim from the intro –

The video is an interview with John Gaeta, who is known for creating the famous bullet time shot in the “Matrix” films. He won the Best Visual Effects Oscar for his work on the Matrix and co-founded Lucasfilm’s immersive entertainment division called ILMxLAB, where he acts as the Executive Creative Director. In the interview, he talks about his experience in creating a demo for the Sony PlayStation super computer, which was shown at Siggraph in 2000. He also mentions his interest in building big and complicated projects while also making entertainment products. Gaeta explains how the bullet time shot was a result of a philosophy they had during the Matrix trilogy of creating methods that might be used if one was making virtual reality. He also touches on how the rise of the internet and gaming helped audiences comprehend the shot better and how it carried on the underlying premise of the Matrix itself. (ChatGPT)

and here’s the full interview –

If, like me, you’re after nuggets and detail (my day job is as a researcher), then this won’t really help you but if you just want to get a sense of what’s being discussed, and you’re reviewing lots of material from various channels, or want a quick summary for promo, then its really a great way to generate an overview.

Creatively, though, we’re far more interested in the potential for Text-to-Otherstuff, such as 3D assets, video and 3D environments. Towards that end, although targeting game asset dev, Scenario.gg is a proposition (launched in 2019) that closed a round of significant seedcorn investment in January. With its creators’ backgrounds in gaming, AI and 3D technology, Scenario’s generative AI creates game assets using both image and text promps, albeit currently outputs are 2D images (see below). Its aim is to support creation of high fidelity assets, 3D models, sounds & music, animations, environments and more, based on users’ uploads of their own content (image and text description). The ownership model on generated content is interesting, which pushes the IP issue back to users since only images you have the right to use can be uploaded.

Scenario believes its product will cut creative production time for game artists (those who choose to work with AI). It surpassed .5M created images on 21 January, so is clearly gaining momentum. This is an interesting development, given comments by Aura Triolo (an Independent Games Festival 2023 judge and animation lead at Ivy Road) in an article covering AI devs for metaverse and games here (by Wagner James Au on his New World Notes blog, published in mid December). Triolo makes the point that time savings probably won’t be worth the effort given how much additional work is required to refine 3D models that AI generates, particularly in AAAs (as in the use of AI for procedural generation). That may well be true in a context where automation tools have been used for some time but this type of toolset will surely benefit thousands of indies, and not just in gaming but also machinima and virtual production. Time will tell.

Meta AI has published a paper that discusses taking their text-to-video (MAV) generator one step further to 4D (NeRFs or neural radiance fields), referring to it as MAV3D (Make-a-Video-3D). It optimizes scene appearance, density and motion consistency from text-to-video, generates a view from any camera location and angle and can be composited into any 3D environment. MAV3D does not require any 3D or 4D data. It is not yet available as a tool to use but here’s the paper to read. We look forward to hearing more about this in due course.

Text-to-fashion? Well, maybe not just yet, however ReadyPlayerMe, which is a cross platform avatar creator, has a new feature on its recently launched ‘labs’ web platform. Currently in beta and free, it allows you to customise avatar outfits using DALL-E’s generative AI art platform for text prompts. After faffing for a few minutes, I created this (but the hair is still a mess!) –

Checking out the new @readyplayerme AI generated custom avatar feature – neat! https://t.co/b5VDgvdzYl

— CompletelyMachinima (@CompletelyMach1) February 5, 2023

Text-to-music is an interesting area. There are no doubt going to be lots of training issues emerge with this type of AI, however, what fascinates me with Google‘s MusicLM is the ability to generate music from rich captions, using a ‘story mode’ (with a sequencer) and even from descriptions of paintings, places and epochs. I don’t think I’ve ever heard anything quite like the piece it generated for Munch’s The Scream, using a description by Iain Zaczek – not exactly melodic but certainly evocative of the artwork. It will also let you hum something and then apply a specific instrument to hear it played back, apparently. There is currently no API through which you can test your own ideas, however, but go to the github page here and check out the samples reported in the paper. Google it seems is only making the dataset of MusicCaps comprising 5.5k music-text pairs available, which includes rich text descriptions provided by human experts, and has obviously decided to let someone else create the API and take the rap with it. It will no doubt be one of many in due course, but there are some great ideas presented in the paper worth checking out.

Curation and Discovery

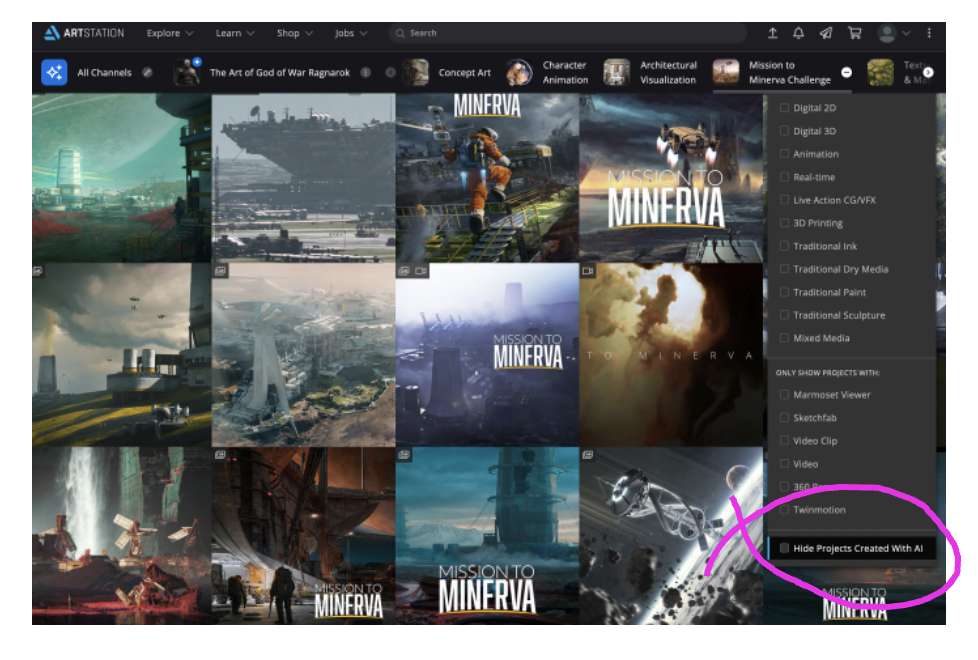

Curating content is one of the perenial problems of the internet – and its a problem that is getting more challenging because even with so much effort being put into the creator toolsets, no one is really paying much attention in the creator context of how work can be discovered (unless of course there is advertising embedded in it, which is a whole different agenda). One can only hope that when advanced AIs are embedded within search engines, new opportunities for content discovery will emerge – sadly, however, I suspect this will result in an even deeper quagmire, leaving it to the key platforms to find a way through. Related to which, Artstation has now improved its AI search and browsing filters – it can hide artwork generated with AI in search and marketplaces and thereby ‘make it easier to discover and connect with creators most relevant to you’, but strangely it doesn’t promote only work created with AI in search.

On the matter of curation, a website for AI generators has launched, called All Things AI – AI developers can submit their tool to the site for its potential inclusion. The site has been developed by Rick Waalders, and whilst there are numerous AI tools and services on the site, there’s not much information yet about its creator or indeed reviews of the AIs themselves. If the site takes off, it might just be the place to find the apps you want – time will tell. Until then, blogs such as Pinar Seyhan-Demirdag‘s Medium post, dated 11 January, are great sources for curated content. In this post, Pinar lists more than a dozen 3D asset and scene generation models – a very useful summary, thanks. Now, what we really need is a Fandom wiki for AIs…!

The Legals are Circling

What a busy month it has been in lawyerland.

On 17 January, in San Francisco US, a class action suit was filed on behalf of three artists. It claims that Stability AI’s Stable Diffusion and DreamStudio, MidJourney and DeviantArt have colluded in the use of an AI that has been trained on scraped content that infringes the rights of copyright holders (the AI being created by a company called LAION which has connections to Stability AI) and that the results of its application by users has a detrimental impact on the artists making profit from their own work as a consequence. One of the legal team has written a detailed blog about the action here, and here is the link to the action, should you want a quick scan through its 46 pages. The following day, 18 January, Getty Images stated that it has commenced proceedings against Stability AI in the High Court of Justice in London –

… Stability AI infringed intellectual property rights including copyright in content owned or represented by Getty Images. It is Getty Images’ position that Stability AI unlawfully copied and processed millions of images protected by copyright and the associated metadata owned or represented by Getty Images absent a license to benefit Stability AI’s commercial interests and to the detriment of the content creators.

Getty Images believes artificial intelligence has the potential to stimulate creative endeavors. Accordingly, Getty Images provided licenses to leading technology innovators for purposes related to training artificial intelligence systems in a manner that respects personal and intellectual property rights. Stability AI did not seek any such license from Getty Images and instead, we believe, chose to ignore viable licensing options and long‑standing legal protections in pursuit of their stand‑alone commercial interests.

No more specifics are available on the Getty case. The latter comments are particularly interesting, however, given its stance with creative contributors whom it has banned from uploading AI generated content, something we highlighted in our December blog post.

On the US class action, notwithstanding the technicalities of its description of how the AI works (which some have already questioned as being incorrect), the action is primarily about two aspects of copyright infringement – one related to a company which is ‘licensing images’ for use in training the AIs (that a couple of the image generating companies are using); the other is the specific use of an artist’s name to generate an image ‘in the style of …’ which suggests that person’s specific work, tagged presumably with their name, has been used without their permission to train the AI. Those using the images ‘in the style of x’ are referred to as ‘imposters’ whom it is being argued are contributing to the fake economy (which different governments are currently trying to control). The suit is not against the imposters but those who allow imposters to profit.

The action holds that the companies ‘scraping’ the images (which is a metaphor for how images are actually used in the diffusion process) could provide a means to seek permission from those artists used but it has not done so because it is ultimately expensive and takes time to do. The action is for compensation of damages for lost revenue and damage to brand identity of artists. The premise for this is that the companies being sued are generating huge amounts of money that is not finding its way to those contributors whose work is used in the training processes. The money flows are therefore the areas where the ‘fair use doctrine’ is being brought to bear.

The substantive legal issue, however, seems to centre on transformative works (from derivative works). Corridor Crew has produced a nice summary in a video with California State attorney Jake Watson explaining how AI probably DOES transform the work sufficiently for its use of copyrighted images to constitute FAIR USE. So it still comes down to what fairness means in terms of money flows, ultimately. Here’s the video –

And for a line by line commentary on another perspective of the class action blog post by one of the artists’ legal team, a response has been created by a group of ‘tech enthusiasts uninvolved in the case, and not lawyers, for the purpose of fighting misinformation’. I hesitated to include this link, especially since the author/s are anonymous and the contact link is to an ancient Simpsons video sniping at profiteering lawyers, but it makes some interesting points and is well referenced.

It generally sounds like there is a fix to this problem, and its one we’ve highlighted in previous posts on this topic, where AI generator platforms pay artists to use their work… oh, wait, isn’t Shutterstock already doing that, working with OpenAI’s DALL-E? Yes, and here is its generator – it pays the artists (I couldn’t find how much) and users pay for the images downloaded ($19/month for 10 images or 1 video). The wicked problem here, Techcrunch argues, is will folks be willing to pay for AI generated artwork, suggesting yes they will if the generator service has the best selection, pricing, discovery, and overall experience for the user and the artist. And, DreamStudio Pro is already a paid for service that folks are using.

Or, you can opt out of your art being included in Stable Diffusion’s AI, using the third party HaveIBeenTrained web service by an artist-based startup called Spawning. It looks as though there have been a few problems using this service according to the comments, such as being able to tag anyone’s imagery, but even more surprising was that not many seem to have viewed the support video in comparison to all the hoo-haa in the media on this topic. Check out this video –

Alternatively, you can just head over to LAION’s website and opt out there (scroll to the bottom of the page), following the long-winded GDPR processes under the EU reparation system.

In the meantime, Artstation has updated its T&Cs to make it clear that scraping, reselling or redistributing content is not permited and furthermore, it has committed to not licensing content to AI generator platforms for training purposes. Epic’s stance is always interesting to note, but its business model is not tied to just this one type of offer as the other platforms are.

And finally on the legals this month, we were intrigued to note that the US Copyright Office appeared to have cancelled the registration of the first AI generated graphic novel, called Zarya of the Dawn (our feature image for this article, by Kristina Kashtanova), claiming it had ‘made an error’ in the registration process… turns out that they are still ‘working on a response’, stating their portal is in beta. This was not before the artist had made an extensive response to the apparent cancellation through her lawyer, Van Lindberg. Its worth taking a few minutes to read the claims for originality, using MidJourney to support her creative processes, here. In sum, the response states –

… the use of that tool does not diminish the human mind that conceived, created, selected, refined, cropped, positioned, framed, and arranged all the different elements of the Work into a story that reflects Kashtanova’s personal experience and artistic vision. As such, the Work is the result of human authorship…

So this is yet another situation where the outcome is awaited. More next month for sure!

Recent Comments